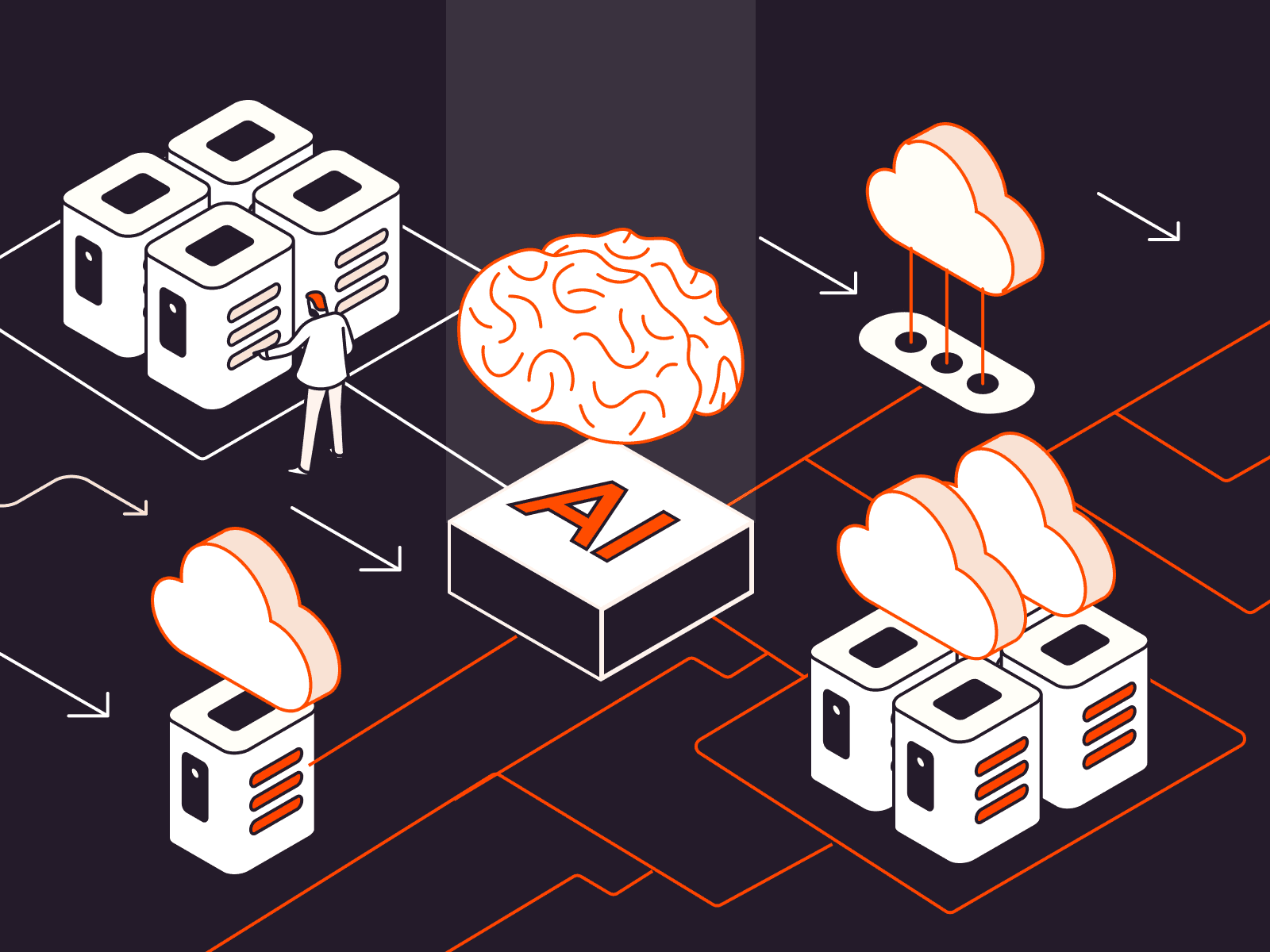

A Practical Blueprint for Scalable, Future-Proof AI Deployment

The enterprise shift toward AI is no longer a matter of “if”—it’s a matter of “how fast.” But while the potential of AI is clear, building the right infrastructure to support it is anything but simple. It requires more than just powerful GPUs or a few lines of machine learning code. True AI maturity depends on a well-architected foundation: high-performance storage, flexible cloud compute, and seamless integration across systems and workflows.

For IT leaders, the challenge lies in aligning these elements to deliver performance, scalability, and manageability without introducing unnecessary complexity or cost overruns. Here’s how to do it right.

1. Intelligent Storage: The Backbone of AI Workloads

AI runs on data—massive volumes of it. Whether you’re training large language models (LLMs), analyzing real-time data streams, or running predictive analytics, storage performance can make or break your pipeline.

Modern AI workloads demand:

- High-throughput, low-latency storage to feed models at the speed of compute.
- Tiered architecture to balance cost and performance (e.g., NVMe for hot data, object storage for archival).
- Data management tools for versioning, lineage tracking, and rapid retrieval across training and inference environments.
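
To make the tiering idea concrete, here is a minimal sketch of an age-based placement rule. The tier names, the 7-day and 90-day thresholds, and the dataset names are all assumptions made for illustration. Real storage platforms usually apply their own automated tiering policies, so treat this as a way to reason about the trade-off rather than as a vendor feature.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical thresholds, chosen only for illustration.
HOT_MAX_AGE = timedelta(days=7)
WARM_MAX_AGE = timedelta(days=90)


@dataclass
class Dataset:
    name: str
    last_accessed: datetime


def choose_tier(dataset: Dataset, now: datetime) -> str:
    """Pick a storage tier from the time elapsed since the last access."""
    age = now - dataset.last_accessed
    if age <= HOT_MAX_AGE:
        return "nvme"    # hot: actively read by training or inference
    if age <= WARM_MAX_AGE:
        return "file"    # warm: re-read occasionally
    return "object"      # cold: archival


# Made-up datasets and a fixed "now" so the result is reproducible.
now = datetime(2025, 1, 31)
datasets = [
    Dataset("training-shards", datetime(2025, 1, 29)),
    Dataset("eval-2024q3", datetime(2024, 12, 1)),
    Dataset("raw-logs-2023", datetime(2023, 6, 1)),
]
placement = {d.name: choose_tier(d, now) for d in datasets}
print(placement)
```

In practice the access signal would come from storage telemetry rather than a hand-written list, and the thresholds would be tuned against the actual cost of each tier.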

Enterprises should look for storage systems that support:

- Scalability without downtime
- AI-native features such as automated tiering, data compression, and security hardening
- Unified access across file, block, and object protocols

Bonus: AI-ready storage with built-in ransomware protection and real-time anomaly detection helps keep your most valuable data safe and supports your compliance requirements.

2. Cloud Compute: Flexibility Meets Performance

The compute layer is where AI models are trained, validated, and deployed. And while on-prem GPUs have their place, cloud-based compute delivers elasticity and speed that are often essential for modern workflows.

A successful cloud strategy includes:

- Hybrid or multi-cloud architecture to avoid vendor lock-in and optimize workloads by cost, performance, or regulatory needs
- Support for AI-specific instances (e.g., NVIDIA A100s, TPU pods) that are optimized for parallel processing
- Model lifecycle orchestration, from training to deployment, using tools like MLflow, Kubernetes, or Ray
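
As a rough illustration of placing workloads by cost, performance, and regulatory needs, the sketch below filters a list of compute options by required accelerator and allowed regions, then picks the cheapest match. The provider names, regions, and hourly prices are invented for the example. A real decision would also weigh data egress, quotas, and availability.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class ComputeOption:
    provider: str
    region: str
    accelerator: str
    hourly_cost: float  # made-up prices, for illustration only


@dataclass(frozen=True)
class Workload:
    name: str
    accelerator: str
    allowed_regions: FrozenSet[str]  # e.g. restricted by data-residency rules


def place(workload: Workload, options: List[ComputeOption]) -> Optional[ComputeOption]:
    """Return the cheapest option that satisfies the workload's constraints."""
    candidates = [
        o for o in options
        if o.accelerator == workload.accelerator
        and o.region in workload.allowed_regions
    ]
    # default=None covers the case where no option meets the constraints.
    return min(candidates, key=lambda o: o.hourly_cost, default=None)


# Hypothetical catalog spanning two cloud providers.
options = [
    ComputeOption("provider-a", "eu", "gpu", 4.10),
    ComputeOption("provider-b", "us", "gpu", 3.20),
    ComputeOption("provider-b", "eu", "gpu", 3.90),
    ComputeOption("provider-a", "us", "tpu", 5.00),
]

finetune = Workload("llm-finetune", "gpu", frozenset({"eu"}))
scoring = Workload("batch-scoring", "tpu", frozenset({"eu"}))

print(place(finetune, options))  # cheapest GPU option inside the EU
print(place(scoring, options))   # None: no TPU option in an allowed region
```

Returning `None` instead of silently falling back to a disallowed region keeps the regulatory constraint explicit, which is the point of treating placement as a policy decision.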

Moreover, many cloud providers now offer AI accelerators, pre-trained models, and managed services to jump-start projects. These are a good fit for teams that need to scale quickly with limited internal expertise.

3. Integration: Unifying Tools, Data, and Teams

Even the most powerful hardware and cloud resources will underperform if not integrated correctly. AI infrastructure must be deeply connected to your existing systems, development pipelines, and security frameworks.

What to prioritize:

- API-first platforms that can easily plug into your CI/CD workflows
- Real-time data pipelines that sync across systems, whether it’s IoT devices, CRM systems, or third-party datasets
- AIOps capabilities to monitor infrastructure, optimize performance, and flag issues automatically
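
To show what "flag issues automatically" can mean at its simplest, here is a sketch that flags metric samples deviating sharply from a rolling window of recent values (a rolling z-score). The window size, the threshold, and the latency figures are assumptions for illustration. Production AIOps tools typically use more robust methods than this.

```python
from collections import deque
from statistics import mean, stdev
from typing import List, Sequence


def flag_anomalies(samples: Sequence[float], window: int = 5,
                   threshold: float = 3.0) -> List[int]:
    """Return indices of samples far from the mean of the preceding window."""
    history = deque(maxlen=window)
    flagged = []
    for i, value in enumerate(samples):
        # Only score a sample once a full window of history is available.
        if len(history) == window:
            mu = mean(history)
            sigma = stdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                flagged.append(i)
        history.append(value)
    return flagged


# Made-up storage latency samples in milliseconds, with one spike.
latency_ms = [20, 21, 19, 20, 22, 21, 20, 95, 21, 20]
print(flag_anomalies(latency_ms))  # → [7]
```

One limitation worth noting: the spike itself enters the window afterwards and inflates the standard deviation, so follow-on anomalies can be masked for a few samples. Median-based statistics are a common way to reduce that effect.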

Integration isn’t just about tools—it’s about people. Encourage collaboration between data engineers, ML teams, and IT admins through shared platforms and governance models. Unified dashboards, role-based access, and workflow automation go a long way in breaking down silos.

A Future-Ready Approach: Design for Scale, Don’t Patch for Growth

Too often, enterprises start with one AI project and end up patching infrastructure as demands evolve. This leads to fragmentation, duplicated cost, and security risks.

Instead, plan for growth from day one:

- Start small, scale smart: choose modular components that can grow with demand.
- Invest in observability: use AI-driven monitoring to optimize resources and control costs.
- Design for interoperability: ensure your tools and platforms can evolve without lock-in.

By architecting your AI infrastructure around intelligent storage, flexible cloud, and seamless integration, you’re not just preparing for today’s workloads—you’re future-proofing for what’s next.

Final Thoughts

AI success isn’t defined by flashy models or short-term results. It’s built on solid infrastructure that empowers your teams to innovate continuously, move fast, and stay secure.

With the right blueprint—intelligent storage as the foundation, cloud compute as the engine, and integration as the glue—your enterprise can unlock the true power of AI.

